As organizations collect ever-increasing volumes of sensitive data across devices, regions, and business units, traditional centralized machine learning approaches are becoming harder to justify. Regulations, privacy concerns, and data sovereignty laws often prevent raw data from being moved to a single location. This challenge has fueled interest in federated learning platforms like Flower, which enable collaborative model training without centralizing data.

TL;DR: Federated learning platforms such as Flower allow organizations to train machine learning models across distributed data sources without moving raw data. Instead of sharing datasets, participants share model updates, improving privacy, security, and regulatory compliance. These platforms are especially useful in healthcare, finance, IoT, and edge computing environments. Flower stands out for its flexibility, framework support, and production-ready design.

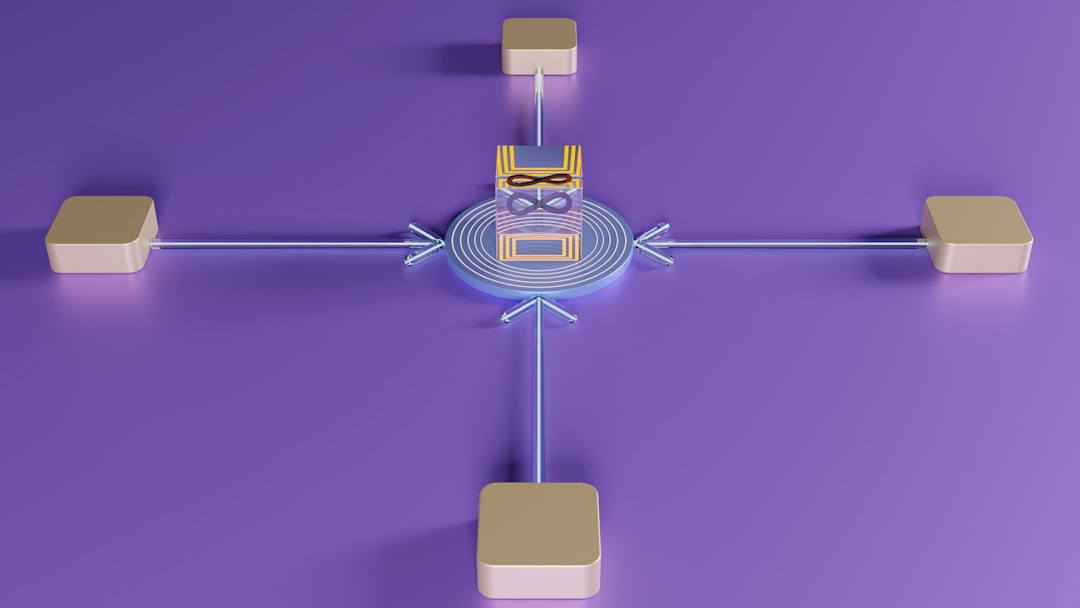

Federated learning is a distributed machine learning approach where multiple clients (devices or servers) collaboratively train a shared model under the orchestration of a central coordinator. Instead of aggregating data into one centralized repository, the algorithm moves to the data. Each participant computes updates locally and sends only model parameters or gradients back to a coordinating server.

Why Federated Learning Is Gaining Momentum

Traditional machine learning pipelines rely on centralized datasets. However, modern data ecosystems present challenges such as:

- Data privacy regulations (GDPR, HIPAA, CCPA)

- Data residency requirements across countries

- Bandwidth limitations in edge environments

- Security risks associated with large centralized data repositories

Federated learning addresses these issues by keeping data local. Only model parameters or updates are shared, and these can be further protected with techniques such as encryption, secure aggregation, or differential privacy.

This shift enables organizations to:

- Comply with regulations without sacrificing AI capabilities

- Leverage data from multiple silos

- Reduce exposure to large-scale data breaches

- Train models directly on edge devices, close to where the data is generated

How Federated Learning Works

The federated learning process generally follows an iterative lifecycle:

- Initialize Model: A global model is created and distributed to participating nodes.

- Local Training: Each client updates the model using its own private dataset.

- Send Updates: Only model updates (not raw data) are sent to the server.

- Aggregation: The server aggregates updates (e.g., using Federated Averaging).

- Redistribution: The updated global model is redistributed for further training rounds.

This cycle repeats until convergence or acceptable performance is achieved.
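The lifecycle above can be simulated in a few lines of NumPy. This is a toy sketch, not Flower code: the linear-regression model, the function names (`local_train`, `fedavg`, `run_federated`), and the synthetic client data are all illustrative assumptions. Aggregation follows the Federated Averaging idea of weighting each client's model by its number of training examples.

```python
import numpy as np

def local_train(global_w, X, y, lr=0.1, epochs=5):
    """Local training: a few epochs of full-batch gradient descent on private data."""
    w = global_w.copy()
    for _ in range(epochs):
        grad = 2.0 * X.T @ (X @ w - y) / len(y)  # gradient of mean squared error
        w -= lr * grad
    return w

def fedavg(updates):
    """Aggregation: average client weights, weighted by each client's example count."""
    total = sum(n for _, n in updates)
    return sum(w * (n / total) for w, n in updates)

def run_federated(clients, dim, rounds=20):
    global_w = np.zeros(dim)  # initialize the global model
    for _ in range(rounds):
        # Each client starts from the current global model (redistribution),
        # trains locally, and sends back only its weights and example count.
        updates = [(local_train(global_w, X, y), len(y)) for X, y in clients]
        global_w = fedavg(updates)  # server-side aggregation
    return global_w

# Toy data: three clients holding different amounts of data from the same linear model.
rng = np.random.default_rng(0)
true_w = np.array([2.0, -1.0])
clients = []
for n in (50, 120, 80):
    X = rng.normal(size=(n, 2))
    clients.append((X, X @ true_w + 0.01 * rng.normal(size=n)))

print(run_federated(clients, dim=2))  # should land close to [2, -1]
```

Raw `X` and `y` never leave the client loop body; only the trained weights and an example count reach `fedavg`.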

Introducing Flower: A Flexible Federated Learning Framework

Flower (installed from PyPI as `flwr`) is one of the most widely adopted open-source federated learning frameworks. Designed with flexibility and scalability in mind, Flower enables researchers and enterprises to build customized federated learning systems without locking them into a specific machine learning library.

Unlike some tightly integrated frameworks, Flower supports:

- PyTorch

- TensorFlow

- scikit-learn

- Hugging Face Transformers

- Custom machine learning pipelines

This framework-agnostic design allows organizations to integrate Flower into existing ML stacks rather than rebuilding workflows.
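Framework agnosticism works because the coordinator never needs to understand a specific library's model objects; it only needs the model's numeric parameters. Flower's NumPy-based client interface, for example, exchanges parameters as lists of NumPy arrays. The adapter below is a hypothetical sketch of that idea (the helper names are illustrative, not part of Flower's API): each client converts its library-specific weights into plain arrays before sending them and converts them back after receiving the aggregated model.

```python
from collections import OrderedDict

import numpy as np

def to_ndarrays(named_params):
    """Convert a {name: array-like} mapping into an ordered name list and NumPy arrays."""
    names = list(named_params)
    arrays = [np.asarray(named_params[name]) for name in names]
    return names, arrays

def from_ndarrays(names, arrays):
    """Rebuild the named mapping from arrays received back from the server."""
    return OrderedDict(zip(names, arrays))

# Hypothetical weights; in practice these could come from any ML library.
weights = {"dense.weight": [[0.1, 0.2], [0.3, 0.4]], "dense.bias": [0.0, 0.5]}
names, arrays = to_ndarrays(weights)  # `arrays` is all the coordinator needs to see
restored = from_ndarrays(names, arrays)
print({name: value.shape for name, value in restored.items()})
```

With PyTorch, for instance, the input mapping could be built as `{k: v.cpu().numpy() for k, v in model.state_dict().items()}`; other libraries would need their own small conversion step.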

Key Features of Flower

1. Framework Agnosticism

Flower does not impose a specific ML stack. Teams can use their preferred tools, accelerating experimentation and production deployment.

2. Scalable Architecture

Flower supports simulations with thousands of virtual clients as well as real-world deployments across distributed infrastructure.

3. Customizable Federated Strategies

Beyond standard Federated Averaging (FedAvg), users can implement custom aggregation strategies, making it suitable for research environments.
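As an illustration of a custom aggregation rule, the function below implements a coordinate-wise trimmed mean, a robust alternative to plain averaging that discards the most extreme values of each parameter before averaging. It is a standalone NumPy sketch, not Flower's strategy API; in Flower 1.x, logic like this would typically live in a custom strategy's `aggregate_fit` method. The `trim_ratio` parameter and the example updates are assumptions.

```python
import numpy as np

def trimmed_mean(client_updates, trim_ratio=0.2):
    """Coordinate-wise trimmed mean: drop the largest and smallest values per coordinate."""
    stacked = np.stack(client_updates)      # shape: (num_clients, num_params)
    num_clients = stacked.shape[0]
    k = int(trim_ratio * num_clients)       # how many values to drop from each end
    if 2 * k >= num_clients:
        raise ValueError("trim_ratio is too large for the number of clients")
    sorted_vals = np.sort(stacked, axis=0)  # sort each coordinate independently
    return sorted_vals[k:num_clients - k].mean(axis=0)

updates = [np.array([1.0, 1.0]), np.array([1.1, 0.9]), np.array([0.9, 1.1]),
           np.array([1.0, 1.0]), np.array([50.0, -50.0])]  # last client is an outlier
print(trimmed_mean(updates))  # the outlier is trimmed away; a plain mean would not be
```

A plain average of these five updates would be pulled to roughly `[10.8, -9.2]` by the single outlier, while the trimmed mean stays near `[1, 1]`.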

4. Production-Ready Deployment

Flower provides tools for secure communication, monitoring, and orchestration, enabling enterprise adoption.

5. Privacy and Security Integration

Developers can integrate techniques such as:

- Differential privacy

- Secure aggregation

- Encryption protocols

- Authentication layers
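Differential privacy is commonly applied to federated updates by clipping each update to a maximum norm and adding Gaussian noise scaled to that norm. The sketch below shows only those two mechanics, under assumed parameter names (`clip_norm`, `noise_multiplier`). It adds noise on the client, whereas many deployments add noise once to the aggregate on the server instead, and it omits the privacy accounting needed to state an actual (epsilon, delta) guarantee.

```python
import numpy as np

def privatize_update(update, clip_norm=1.0, noise_multiplier=1.0, rng=None):
    """Clip an update's L2 norm to clip_norm, then add Gaussian noise scaled to clip_norm."""
    if rng is None:
        rng = np.random.default_rng()
    norm = np.linalg.norm(update)
    # Scale down only if the update exceeds clip_norm; this bounds each client's influence.
    clipped = update * min(1.0, clip_norm / (norm + 1e-12))
    noise = rng.normal(0.0, noise_multiplier * clip_norm, size=update.shape)
    return clipped + noise

raw = np.array([3.0, 4.0])  # L2 norm 5.0, so it is scaled down to norm 1.0 before noising
print(privatize_update(raw, clip_norm=1.0, noise_multiplier=0.5, rng=np.random.default_rng(0)))
```

Clipping matters because the noise scale is tied to `clip_norm`: without a bound on each update's size, no fixed amount of noise could hide an individual client's contribution.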

Other Federated Learning Platforms

While Flower is popular, it operates within a broader ecosystem of federated learning platforms. Key alternatives include:

- TensorFlow Federated (TFF) – Developed by Google, tightly integrated with TensorFlow.

- PySyft – Focused on privacy-preserving AI and secure multiparty computation.

- OpenFL – Designed for enterprise and healthcare use cases.

- NVIDIA FLARE – Optimized for large-scale, high-performance federated workloads.

Comparison of Popular Federated Learning Platforms

| Platform | Primary Strength | Framework Support | Enterprise Ready | Best For |
|---|---|---|---|---|
| Flower | Flexibility and scalability | Multiple (PyTorch, TF, etc.) | Yes | Research and production |
| TensorFlow Federated | Tight TF integration | TensorFlow only | Moderate | TensorFlow users |
| PySyft | Privacy research | PyTorch-focused | Moderate | Academic privacy projects |
| OpenFL | Healthcare collaboration | Multiple | Yes | Regulated industries |
| NVIDIA FLARE | High performance | Multiple | Yes | Large-scale enterprise AI |

Real-World Use Cases

Healthcare

Hospitals cannot freely share patient data due to strict privacy regulations. Federated learning allows them to collaboratively train diagnostic models without exposing sensitive records.

Financial Services

Banks can jointly train fraud detection models while keeping transaction data internal. This improves detection accuracy without violating competition or privacy rules.

Edge and IoT Devices

Smartphones, wearables, and industrial sensors continuously generate data. Rather than transmitting sensitive user data to the cloud, each device trains on its own data and contributes only model updates to a shared global model.

Benefits of Federated Learning Platforms Like Flower

- Enhanced Privacy: Data remains local.

- Regulatory Compliance: Easier adherence to strict legal frameworks.

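- Reduced Data Transfer Costs: Only model updates are transmitted, which is often far less than the raw data, although large models exchanged over many rounds can still generate substantial traffic.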

- Improved Security: Lower risk of large-scale data exposure.

- Cross-Silo Collaboration: Organizations can collaborate despite competitive or geographic barriers.
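Whether transmitting model updates is actually cheaper than moving raw data depends on model size, the number of clients, and the number of rounds. A back-of-the-envelope estimate, assuming uncompressed 32-bit parameters, full client participation, and the full model sent in both directions every round (the function name and figures are hypothetical):

```python
def bytes_per_round(num_params, num_clients, bytes_per_param=4):
    """Traffic for one round: every client downloads and uploads the full model once."""
    model_bytes = num_params * bytes_per_param
    return 2 * model_bytes * num_clients

# Hypothetical setup: 1M float32 parameters, 100 clients, 50 rounds.
per_round = bytes_per_round(num_params=1_000_000, num_clients=100)
total = per_round * 50
print(f"{per_round / 1e6:.0f} MB per round, {total / 1e9:.0f} GB in total")
```

With these numbers the script prints `800 MB per round, 40 GB in total`. Sampling a fraction of clients per round, compressing updates, or sending fewer rounds all reduce this figure.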

Challenges and Considerations

Despite its advantages, federated learning presents several challenges:

- Statistical Heterogeneity: Data distributions may differ significantly across clients.

- System Heterogeneity: Devices have varying compute power and connectivity.

- Communication Overhead: Frequent round-trip updates can add latency.

- Model Drift: Local biases may influence global performance.
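Statistical heterogeneity is often simulated in experiments by splitting a dataset across clients with a Dirichlet distribution over class proportions: a small concentration parameter `alpha` gives each client a heavily skewed label mix, while a large `alpha` approaches an even split. A minimal NumPy sketch, with illustrative function and parameter names:

```python
import numpy as np

def dirichlet_partition(labels, num_clients, alpha, seed=0):
    """Split example indices across clients, with label skew controlled by alpha."""
    rng = np.random.default_rng(seed)
    client_indices = [[] for _ in range(num_clients)]
    for cls in np.unique(labels):
        idx = np.flatnonzero(labels == cls)
        rng.shuffle(idx)
        # Draw the share of this class that each client receives.
        proportions = rng.dirichlet(alpha * np.ones(num_clients))
        cut_points = (np.cumsum(proportions)[:-1] * len(idx)).astype(int)
        for client, part in enumerate(np.split(idx, cut_points)):
            client_indices[client].extend(part.tolist())
    return client_indices

labels = np.repeat(np.arange(3), 100)  # 300 examples, 3 classes
for alpha in (100.0, 0.1):
    parts = dirichlet_partition(labels, num_clients=4, alpha=alpha)
    print(alpha, [np.bincount(labels[p], minlength=3).tolist() for p in parts])
```

Comparing the per-client class counts for the two `alpha` values shows how quickly the split moves from near-uniform to highly skewed.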

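Platforms like Flower help mitigate statistical and system heterogeneity, communication overhead, and drift through customizable aggregation strategies and orchestration tools, but they do not eliminate these problems. Organizations still need to design for uneven data distributions, slow or dropped clients, and the total number of communication rounds.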
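One well-known strategy for statistical heterogeneity is FedProx, which adds a proximal term `(mu / 2) * ||w - w_global||^2` to each client's local objective so that local models cannot drift too far from the current global model. The sketch below assumes a simple linear-regression client; `mu` controls the strength of the pull, and `mu = 0` reduces to plain local gradient descent. The function name and data are illustrative.

```python
import numpy as np

def fedprox_local_train(global_w, X, y, mu=0.1, lr=0.1, epochs=5):
    """Local gradient descent on MSE plus the FedProx term (mu / 2) * ||w - global_w||^2."""
    w = global_w.copy()
    for _ in range(epochs):
        data_grad = 2.0 * X.T @ (X @ w - y) / len(y)  # gradient of mean squared error
        prox_grad = mu * (w - global_w)               # gradient of the proximal term
        w -= lr * (data_grad + prox_grad)
    return w

rng = np.random.default_rng(1)
X = rng.normal(size=(100, 2))
y = X @ np.array([3.0, -2.0])  # this client's data pulls toward its own optimum
global_w = np.zeros(2)
for mu in (0.0, 1.0):
    w = fedprox_local_train(global_w, X, y, mu=mu)
    print(mu, np.linalg.norm(w - global_w))  # larger mu keeps w closer to global_w
```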

The Future of Federated Learning

Federated learning is increasingly intersecting with other privacy-enhancing technologies, including:

- Homomorphic encryption

- Secure enclaves

- Differential privacy at scale

- Blockchain-based coordination mechanisms

As AI adoption expands into regulated industries and edge environments, federated learning platforms will likely become core infrastructure components. Flower’s modularity and extensibility position it as a long-term player in both research and enterprise settings.

In a world where data is abundant but restricted, federated learning enables organizations to unlock value while maintaining trust. Platforms like Flower exemplify how modern AI systems can be collaborative without being centralized.

Frequently Asked Questions (FAQ)

1. What is federated learning in simple terms?

Federated learning is a method of training machine learning models across multiple devices or servers without moving the data to a central location. Instead, only model updates are shared and aggregated.

2. How does Flower differ from TensorFlow Federated?

Flower is framework-agnostic and works with multiple ML libraries, while TensorFlow Federated is tightly integrated with TensorFlow and best suited for TensorFlow-centric workflows.

3. Is federated learning completely secure?

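Federated learning improves privacy by keeping data local, but it is not secure on its own: model updates can still leak information about the training data. Techniques such as secure aggregation, encryption, and differential privacy are recommended for stronger guarantees.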
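Secure aggregation protocols hide individual updates from the server while still letting it compute their sum. The core idea, pairwise masks that cancel out when summed, can be illustrated with a toy sketch. This is not a secure implementation: a single seeded generator stands in for the pairwise key agreement a real protocol would use, and production protocols also handle client dropouts and work over finite fields rather than floating point.

```python
import numpy as np

def masked_updates(updates, seed=0):
    """Add pairwise masks that cancel in the sum, so the server sees only masked vectors."""
    rng = np.random.default_rng(seed)
    n = len(updates)
    masked = [u.astype(float).copy() for u in updates]
    for i in range(n):
        for j in range(i + 1, n):
            # In a real protocol, clients i and j would derive this mask from a shared secret.
            mask = rng.normal(size=updates[i].shape)
            masked[i] += mask  # client i adds the pairwise mask
            masked[j] -= mask  # client j subtracts it, so it cancels in the sum
    return masked

updates = [np.array([1.0, 2.0]), np.array([3.0, 4.0]), np.array([5.0, 6.0])]
masked = masked_updates(updates)
print(sum(masked))  # approximately [9., 12.]; individual masked vectors look random
```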

4. Can federated learning be used in production environments?

Yes. Platforms like Flower and NVIDIA FLARE are designed for production use, offering secure communication, monitoring, and scalable coordination.

5. What industries benefit most from federated learning?

Healthcare, finance, telecommunications, manufacturing, and edge device ecosystems benefit significantly due to strict data security and regulatory requirements.

6. Does federated learning reduce model quality?

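Not necessarily. Data heterogeneity can reduce performance, but well-configured aggregation strategies often produce models that approach the accuracy of centralized training on the pooled data, and that typically outperform models trained on any single participant's data, because they learn from a broader range of examples.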